Criminal Liability of GenAI Providers for User-Generated CSAM

Conference Paper at CAIN 2026

This archive contains the replication package for the article “Criminal Liability of Generative Artificial Intelligence Providers for User-Generated Child Sexual Abuse Material” by Anamaria Mojica-Hanke, Thomas Goger, Svenja Wölfel, Brian Valerius, and Steffen Herbold, accepted at the 5th International Conference on Artificial Intelligence.

The purpose of this replication package is to enable other researchers to reproduce our results with minimal effort and to provide additional results that could not be included in the article itself.

Abstract

The development of more powerful Generative Artificial Intelligence (GenAI) has expanded its capabilities and the variety of outputs. This has introduced significant legal challenges, including gray areas in various legal systems, such as the assessment of criminal liability for those responsible for these models. Therefore, we conducted a multidisciplinary study utilizing the statutory interpretation of relevant German laws, which, in conjunction with scenarios, provides a perspective on the different properties of GenAI in the context of Child Sexual Abuse Material (CSAM) generation. We found that generating CSAM with GenAI may have criminal and legal consequences not only for the user committing the primary offense but also for individuals responsible for the models —such as independent software developers, researchers, and company representatives. Additionally, the assessment of criminal liability may be affected by contextual and technical factors, including the type of generated image, content moderation policies, and the model’s intended purpose. Based on our findings, we discussed the implications for different roles, as well as the requirements when developing such systems.

Research Question

Who may be held criminally liable, and under what conditions, when CSAM is generated using GenAI?

Who we are

To address the research question of this study, we brought together expertise from both the technical (AI) and legal domains. Accordingly, the project involved close collaboration between computer scientists and legal experts, including legal scholars and prosecutors.

Our Multidisciplinary Team

- Anamaria Mojica-Hanke. PhD student, Faculty of Computer Science and Mathematics, University of Passau.

- Thomas Goger. Public Prosecutor, Bavarian Central Office for the Prosecution of Cybercrime.

- Svenja Wölfel. Research Assistant, Faculty of Law, University of Passau.

- Brian Valerius. Professor, Faculty of Law, University of Passau.

- Steffen Herbold. Professor, Faculty of Computer Science and Mathematics, University of Passau.

General Method - Summary

This study adopts a multidisciplinary approach, combining statutory interpretation under German law with a scenario-based analysis to examine how criminal liability may arise when GenAI models are used to generate CSAM.

- Main legal frameworks used in this study: German Criminal Code (StGB), German Youth Protection Act, and relevant parts of the EU Artificial Intelligence Act (AI Act).

-

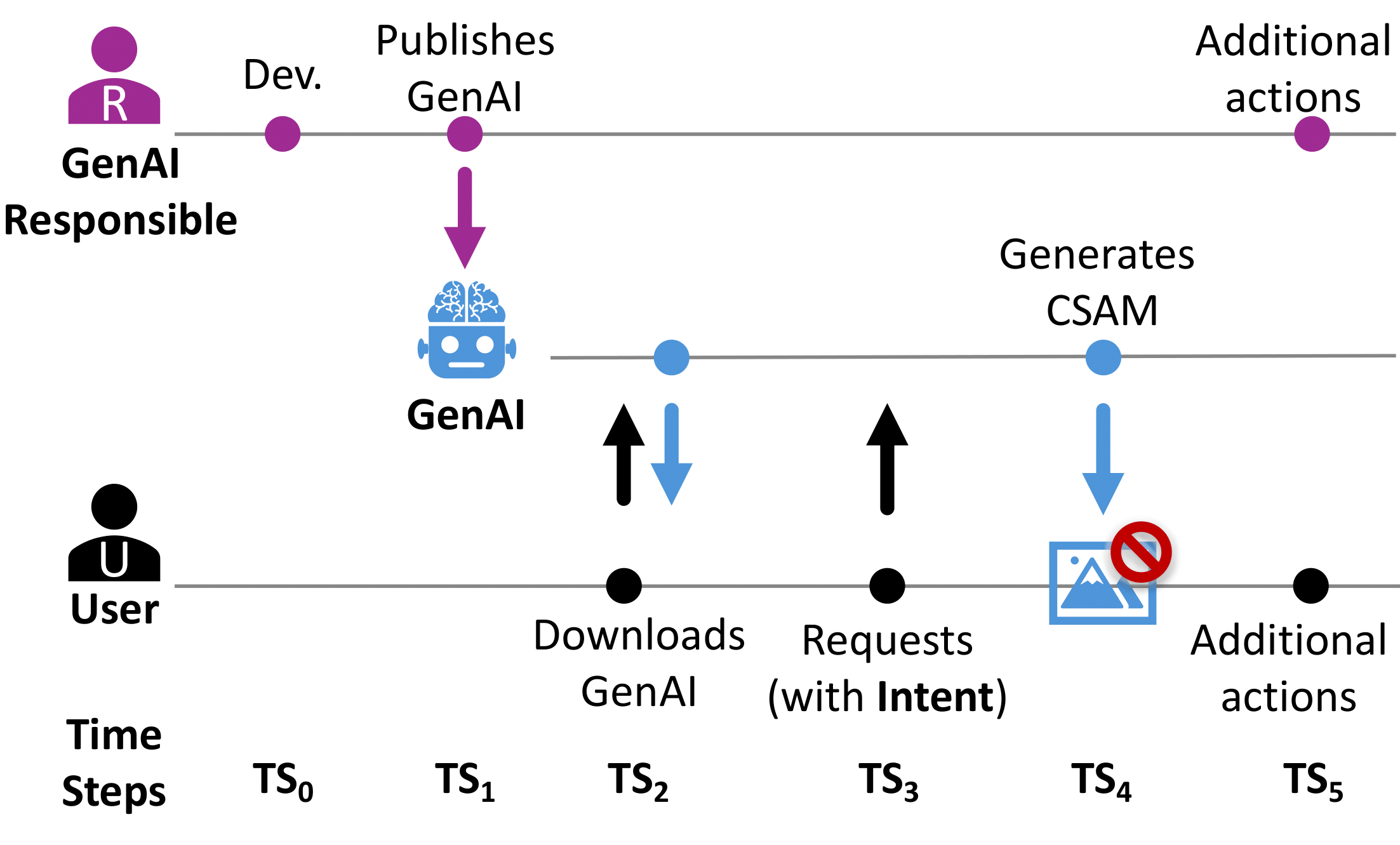

Template Scenario: We built a template scenario that has three main actors, an user, a GenAI Responsible and the GenAI.

In addition, we also have six different time steps, in which the GenAI is developed (T0); the model is published (T1); the model is download (T2); CSAM is requested (T3); CSAM is generated(T4); and finally, additional actions can be taken (T5).

- Contextual and Technical Factors: In the template scenario we varied the following contextual and technical factors.

| Factor | Description |
|---|---|
| Type of Model | Foundational model or modified-foundational model. |
| Purpose of the Model | General purpose, non-human related, or human related purposes. |
| Nudity | Nudity can be explicitly present or not in the purpose. |
| Type of Imagery Generated | Artistic or realistic imagery. |
| How the Model is Published | Standalone download, part of an app (e.g., a chatbot), or offered as a service. |
| Geographical Aspect | Location of the server, the location of the User, or the nationality of the User. |
| Terms of Use (ToU) | The restriction of not using the model for CSAM generation is explicitly mentioned or not. |
| Content Moderation | The content moderation of unlawful material generated by the GenAI in relation to CSAM can be state-of-the-art, not state-of-the-art, or nonexistent. |
| Access to Internet | The GenAI app or service is reachable via the Internet or not. |
| Storage of User Requests and Images | The generated imagery and requests can be stored or not. |
| Share Results of Prompts | Apps or services can offer the functionality to share prompts and generated results (incl. CSAM imagery) or not. |
| Monetize with the Users’ Histories | Prompts and generated results (incl. possibly CSAM imagery) can be used for monetization or not. |

Based on the template scenario and the different possible factor values, we constructed multiple scenarios (Scenarios) that were independently analyzed by two groups of legal experts (Answer 1, Answer 2). Any discrepancies were subsequently discussed in a joint meeting involving both groups of legal experts and the computer scientists, which also ensured that the legal reasoning accurately reflected the technical realities of each scenario (Distilled answers).
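To illustrate how scenarios can be derived from the factor table, the following is a minimal Python sketch, not the procedure used in the study. The identifiers (`FACTORS`, `iter_scenarios`, `vary_one`) and the short value labels are hypothetical paraphrases of the table. The Geographical Aspect factor is omitted because the table lists dimensions rather than discrete values for it.

```python
from itertools import product
from math import prod

# Hypothetical encoding of the factor table; labels paraphrase the README.
FACTORS = {
    "model_type": ["foundational", "modified-foundational"],
    "purpose": ["general", "non-human related", "human related"],
    "nudity_in_purpose": [True, False],
    "imagery": ["artistic", "realistic"],
    "publication": ["standalone download", "part of an app", "service"],
    "tou_bans_csam": [True, False],
    "content_moderation": ["state-of-the-art", "not state-of-the-art", "nonexistent"],
    "internet_access": [True, False],
    "stores_requests_and_images": [True, False],
    "sharing_enabled": [True, False],
    "monetizes_histories": [True, False],
}


def count_scenarios(factors):
    """Size of the full factor space (product of the number of values)."""
    return prod(len(values) for values in factors.values())


def iter_scenarios(factors):
    """Lazily yield every combination of factor values as a dict."""
    names = list(factors)
    for values in product(*(factors[name] for name in names)):
        yield dict(zip(names, values))


def vary_one(baseline, factors, name):
    """Scenarios that differ from `baseline` only in the factor `name`."""
    return [{**baseline, name: value} for value in factors[name]]


if __name__ == "__main__":
    baseline = next(iter_scenarios(FACTORS))
    print(count_scenarios(FACTORS))
    for scenario in vary_one(baseline, FACTORS, "content_moderation"):
        print(scenario["content_moderation"])
```

Under this encoding the full factor space is large, so varying one factor at a time against a baseline (as `vary_one` does) is one plausible way to keep the number of scenarios manageable. The README states only that multiple scenarios were constructed, not how the combinations were selected.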

For more details on the methodology, please refer to the paper.

Important Findings

The following summarizes the key findings of the research paper. To fully understand them, please refer to the complete article.

Parties responsible for GenAI may primarily face secondary liability; however, primary liability is also possible if specific conditions are met, e.g., enabling sharing of generated content via the platform.

Implications for Developers and GenAI Providers

- Defining and enforcing timely CSAM content-moderation policies across the GenAI life cycle (such as clean training data, red-teaming, active monitoring, and continuous updates) is a priority requirement to mitigate criminal misuse and liability risk.

- Prefer provider-hosted GenAI, because centralized control enables post-deployment moderation and quick actions against misuse.

- GenAI providers and contributors cannot rely on Terms of Use bans or foreign hosting for immunity: they may still face criminal liability, including under Germany’s broad jurisdiction, even where non-photorealistic CSAM is generated and disseminated.

- Upon becoming aware of CSAM in generated data, providers must act promptly (remove detected CSAM, update moderation, or shut down the service) to avoid increased criminal liability, especially when the CSAM is stored, shareable, or monetized.

- Models with a nudity-related purpose must include strong CSAM-risk evaluation and safeguards: an explicit CSAM purpose shows intent, and even a general nudity purpose may support a finding of intent.

Implications for Researchers

Researchers who work on GenAI also bear responsibility for the models they publish. Consequently, the previous suggestions and advice also apply to researchers.

- Researchers are responsible for what they release, even if others later modify it. Ensuring state-of-the-art content-moderation safeguards at first user access is recommended, which implies continuous updates and maintained access for modification.

Implications for Policymakers

- Clearer CSAM legislation is needed for GenAI providers, with defined consequences for failures. Such legislation should take low-risk uses into account to protect innovation.

Realism of Criminal Prosecution

- Whether prosecutions of GenAI providers, developers, or researchers are realistic remains unclear, an ambiguity that calls for caution and robust safeguards.

Limitations and Future Work

Limitations

- Geographical scope. Legal frameworks vary across jurisdictions, for example, in the legislation on artistic CSAM.

- Interpretation of the law. Our analysis identifies only a potential future risk, which would arise only if several conditions occur together: a prosecution in Germany involving AI-generated CSAM, a prosecutor who views the provider’s actions as aiding and abetting, and judges who agree with this interpretation and issue a conviction.

Future Work

Future work should extend this analysis beyond German law by examining how other legal frameworks address the generation of CSAM by generative AI systems. In addition, further research is encouraged to explore other legal gray areas.

Collaboration

Want to explore this question under a different legal framework? Let’s collaborate: get in touch!

(Contact Anamaria Mojica-Hanke)